Table of Contents

- Key Takeaways

- 1. Start by defining what counts as an AI incident

- 2. Assign ownership before the incident starts

- 3. Build a response workflow with clear decision points

- 4. Set severity levels based on business and regulatory impact

- 5. Prepare the evidence trail you will need later

- 6. Test the plan with realistic scenarios

- What a strong AI incident response plan should include

- Final thoughts

AI systems fail in ways that traditional software does not. They can expose sensitive data, produce harmful or inaccurate outputs, violate policy, trigger regulatory scrutiny, or create operational disruption at scale. If your organization is deploying AI in customer support, internal workflows, finance, compliance, or product features, you need an incident response plan designed for AI-specific risk.

The challenge is not only technical. An effective response requires security to investigate, legal to assess exposure, and operations to keep the business running while the issue is contained. Without a shared plan, teams lose time deciding who owns the problem, what to say, and what to shut down.

This article breaks down a practical way to build an AI incident response plan that is clear enough to use during a real event and flexible enough to fit your organization’s tools, workflows, and regulatory obligations.

Key Takeaways

- AI incidents should be treated as cross-functional events, not just security tickets.

- Your plan needs defined triggers, owners, escalation paths, and approval rules before an incident happens.

- Security, legal, and operations each play a different role in containment, disclosure, and recovery.

- Not every AI issue requires the same response; severity levels should map to business and regulatory impact.

- Tabletop exercises are one of the fastest ways to find gaps in your plan.

1. Start by defining what counts as an AI incident

Before you can respond consistently, you need a shared definition of an AI incident. That definition should go beyond security breaches and include failures that create business, compliance, or reputational risk.

Examples of AI incidents

- Exposure of sensitive data through prompts, logs, or model outputs

- Hallucinated or incorrect outputs used in a decision-making workflow

- Prompt injection that causes the model to ignore instructions or reveal data

- Unauthorized model behavior, such as generating prohibited content

- Vendor model outage that disrupts a critical business process

- Bias, discrimination, or other harmful outputs that create legal exposure

The point is to establish a common threshold for escalation. A minor performance issue may only require engineering follow-up. A high-impact hallucination in a customer-facing workflow may require immediate legal review, customer communications, and a containment decision.
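One way to make that shared definition concrete is to keep it as data that triage tooling and runbooks can reference. The minimal Python sketch below encodes the example incident types above and maps each one to the categories used in the Classify step of the response workflow. The names (`IncidentType`, `CATEGORY_MAP`, `categories_for`) and the specific mappings are illustrative assumptions, not a standard taxonomy.

```python
from enum import Enum


class Category(Enum):
    """Incident categories an event can fall into (one event may hit several)."""
    SECURITY = "security"
    PRIVACY = "privacy"
    LEGAL = "legal"
    OPERATIONAL = "operational"
    REPUTATIONAL = "reputational"


class IncidentType(Enum):
    """Hypothetical identifiers for the example AI incident types."""
    DATA_EXPOSURE = "sensitive data exposed via prompts, logs, or outputs"
    DECISION_HALLUCINATION = "incorrect output used in a decision-making workflow"
    PROMPT_INJECTION = "prompt injection overrides instructions or reveals data"
    PROHIBITED_CONTENT = "model generates prohibited content"
    VENDOR_OUTAGE = "vendor model outage disrupts a critical process"
    HARMFUL_BIAS = "biased or discriminatory output creates legal exposure"


# Illustrative mapping only; each organization should agree on its own.
CATEGORY_MAP: dict[IncidentType, frozenset[Category]] = {
    IncidentType.DATA_EXPOSURE: frozenset({Category.SECURITY, Category.PRIVACY, Category.LEGAL}),
    IncidentType.DECISION_HALLUCINATION: frozenset({Category.OPERATIONAL, Category.LEGAL}),
    IncidentType.PROMPT_INJECTION: frozenset({Category.SECURITY}),
    IncidentType.PROHIBITED_CONTENT: frozenset({Category.REPUTATIONAL, Category.LEGAL}),
    IncidentType.VENDOR_OUTAGE: frozenset({Category.OPERATIONAL}),
    IncidentType.HARMFUL_BIAS: frozenset({Category.LEGAL, Category.REPUTATIONAL}),
}


def categories_for(incident: IncidentType) -> frozenset[Category]:
    """Return the agreed categories; fail loudly if a type was never classified."""
    try:
        return CATEGORY_MAP[incident]
    except KeyError:
        raise ValueError(f"No agreed classification for {incident.name}") from None
```

Keeping the mapping in one place means a newly added incident type cannot quietly skip classification: `categories_for` raises until someone agrees on where it belongs.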

2. Assign ownership before the incident starts

Responses to AI incidents break down quickly when ownership is unclear. The plan should name a primary incident owner and specify how each function participates.

| Team | Primary responsibility | What they need in the moment |
|---|---|---|
| Security | Investigate the technical cause, preserve evidence, contain the issue | Access to logs, model configuration, vendor details, and impact scope |
| Legal | Assess regulatory, contractual, privacy, and disclosure obligations | Facts, timestamps, affected data types, and customer impact |
| Operations | Maintain service continuity and coordinate business decisions | Clear guidance on what to pause, reroute, or disable |
| Product/Engineering | Fix the issue and validate the remediation | Reproduction steps, severity, and change-control authority |

In practice, security often leads the investigation, but that does not mean security decides everything. Legal should control external disclosure language. Operations should decide how to preserve customer service while the incident is contained. Product and engineering should own the technical fix and follow-up controls.
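The split of authority described above can also be written down as a decision-rights map, so a runbook or chat bot can answer "who decides this?" without debate. The sketch below is a minimal Python illustration; the decision names and the `owner_for` and `decisions_owned_by` helpers are hypothetical.

```python
# Hypothetical decision-rights map: each decision has exactly one accountable team.
DECISION_RIGHTS: dict[str, str] = {
    "lead_investigation": "Security",
    "preserve_evidence": "Security",
    "external_disclosure_language": "Legal",
    "service_continuity": "Operations",
    "technical_fix": "Product/Engineering",
    "follow_up_controls": "Product/Engineering",
}


def owner_for(decision: str) -> str:
    """Return the single owner of a decision, or fail if nobody owns it yet."""
    owner = DECISION_RIGHTS.get(decision)
    if owner is None:
        raise LookupError(f"Unowned decision: {decision!r}; assign it before an incident")
    return owner


def decisions_owned_by(team: str) -> list[str]:
    """List every decision a team is accountable for, sorted for stable output."""
    return sorted(d for d, t in DECISION_RIGHTS.items() if t == team)
```

A plain dictionary enforces one owner per decision by construction, which is the point: shared work is fine, but each decision still needs a single accountable name.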

3. Build a response workflow with clear decision points

A strong AI incident response plan works like a checklist, not a paragraph of policy. It should show who does what, in what order, and at which decision points approval is required.

A practical workflow usually includes five stages:

- Detect: identify the issue through monitoring, user reports, audits, or vendor alerts.

- Classify: determine whether the event is a security, privacy, legal, operational, or reputational incident; many events fall into more than one category.

- Contain: disable affected features, restrict access, rotate credentials, or pause model usage if needed.

- Investigate: collect logs, prompts, outputs, policy settings, and vendor communications.

- Recover: restore service, communicate updates, and implement preventive controls.

Each stage should have a clear owner and a time expectation. For example, a high-severity AI incident may require initial triage within 30 minutes, legal review within one hour, and a containment decision within the same business day.
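To make time expectations checkable, the example targets for a high-severity incident can be turned into concrete deadlines computed from the detection timestamp. This Python sketch assumes the business day closes at 18:00 in the timestamp's own time zone and that weekends are not business days. Both assumptions, and the `high_severity_deadlines` name, are illustrative and should be replaced with your own calendar and holiday rules.

```python
from datetime import datetime, timedelta

# Assumptions for illustration only: adjust to your own business calendar.
BUSINESS_CLOSE_HOUR = 18
TRIAGE_WITHIN = timedelta(minutes=30)
LEGAL_REVIEW_WITHIN = timedelta(hours=1)


def end_of_business_day(detected: datetime) -> datetime:
    """Close of the business day on which the incident was detected.

    If detection happens after close or on a weekend, roll forward to the
    close of the next weekday (holidays are ignored in this sketch).
    """
    close = detected.replace(hour=BUSINESS_CLOSE_HOUR, minute=0, second=0, microsecond=0)
    if detected.weekday() < 5 and detected < close:
        return close
    day = close + timedelta(days=1)
    while day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        day += timedelta(days=1)
    return day


def high_severity_deadlines(detected: datetime) -> dict[str, datetime]:
    """Deadlines for the example high-severity targets: 30 min, 1 hour, same day."""
    return {
        "initial_triage": detected + TRIAGE_WITHIN,
        "legal_review": detected + LEGAL_REVIEW_WITHIN,
        "containment_decision": end_of_business_day(detected),
    }
```

Under this rule a Friday-evening incident gets a Monday containment deadline, which may be too slow for customer-facing systems; that is exactly the kind of edge case worth settling before an incident rather than during one.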

Where approval should be mandatory

Some actions should not be taken by engineers alone. Examples include customer notifications, public statements, retaining external counsel, shutting down a revenue-generating AI feature, or notifying regulators. Predefine the approval chain so the team is not improvising during a crisis.
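A predefined approval chain can be expressed as a simple gate that refuses a gated action until every required approver has signed off. In the Python sketch below, the action names mirror the examples above, while the approver roles assigned to each action are hypothetical placeholders for your own chain.

```python
# Hypothetical approval chain: action -> roles that must all approve it.
APPROVAL_CHAIN: dict[str, frozenset[str]] = {
    "customer_notification": frozenset({"Legal", "Operations"}),
    "public_statement": frozenset({"Legal", "Executive"}),
    "retain_external_counsel": frozenset({"Legal"}),
    "disable_revenue_feature": frozenset({"Operations", "Executive"}),
    "notify_regulator": frozenset({"Legal", "Executive"}),
}


def missing_approvals(action: str, approvals: set[str]) -> frozenset[str]:
    """Roles that still need to approve `action`; empty if it is not gated."""
    required = APPROVAL_CHAIN.get(action, frozenset())
    return required - approvals


def can_execute(action: str, approvals: set[str]) -> bool:
    """True only when every required approver for the action has signed off."""
    return not missing_approvals(action, approvals)
```

Ungated containment steps, such as rotating credentials, pass straight through, so engineers can act quickly while high-consequence actions wait for sign-off.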

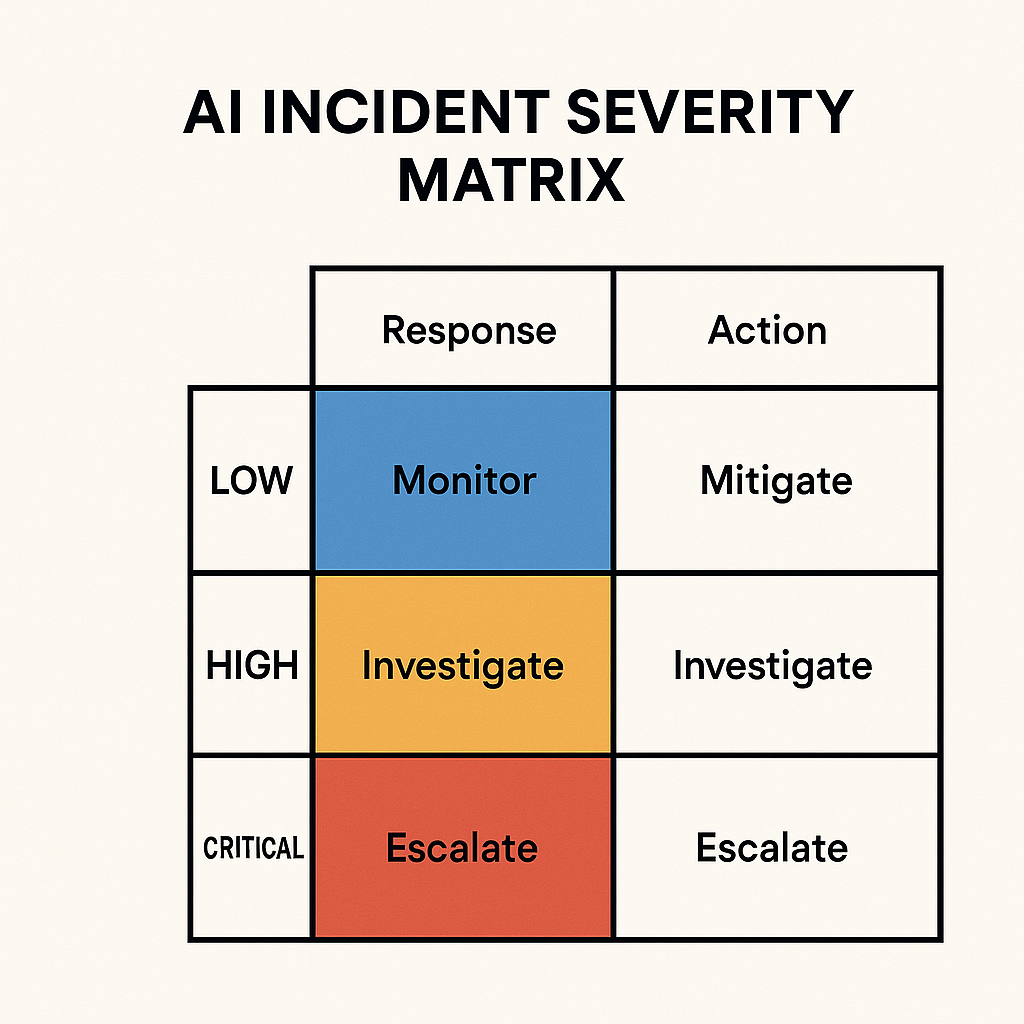

4. Set severity levels based on business and regulatory impact

Not all AI incidents deserve the same response. A useful severity model considers both the technical issue and the downstream impact.

Here is a simple way to think about it:

| Severity | Typical trigger | Response posture |
|---|---|---|
| Low | Minor output error with no sensitive data or customer impact | Track, patch, and review internally |
| Medium | Noticeable model failure affecting a limited workflow | Contain, investigate, and document the incident |
| High | Exposure of confidential data, harmful output, or service disruption | Immediate escalation to security, legal, and operations |
| Critical | Material regulatory, contractual, or reputational risk | Executive involvement, external counsel, and formal communications |

Your severity criteria should reflect the systems you actually use. An AI model in an internal writing tool may be lower risk than the same model embedded in a credit decisioning workflow or medical support product.
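The severity table can be encoded as a small classification function so triage applies the same criteria every time. The Python sketch below follows the four levels in the table. The boolean inputs are simplified assumptions, and the one-level bump for high-risk deployments (such as credit decisioning or medical support) is one illustrative way to reflect the point above, not a rule from any standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Assessment:
    """Simplified triage inputs (hypothetical field names)."""
    material_risk: bool = False  # material regulatory, contractual, or reputational risk
    confidential_data_exposed: bool = False
    harmful_output: bool = False
    service_disruption: bool = False
    limited_workflow_failure: bool = False
    high_risk_deployment: bool = False  # e.g. credit decisioning, medical support


def classify(a: Assessment) -> Severity:
    """Map an assessment onto the four-level table; the highest trigger wins."""
    if a.material_risk:
        level = Severity.CRITICAL
    elif a.confidential_data_exposed or a.harmful_output or a.service_disruption:
        level = Severity.HIGH
    elif a.limited_workflow_failure:
        level = Severity.MEDIUM
    else:
        level = Severity.LOW
    # Illustrative assumption: the same failure is one level worse in a high-risk context.
    if a.high_risk_deployment:
        level = Severity(min(level + 1, Severity.CRITICAL))
    return level
```

Using `IntEnum` keeps levels ordered, so comparisons like `classify(a) >= Severity.HIGH` can drive automatic escalation to security, legal, and operations.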

5. Prepare the evidence trail you will need later

In an AI incident, investigation speed matters, but so does evidence quality. If you cannot reconstruct what happened, it becomes harder to determine root cause, assess impact, or defend the organization’s response.

At minimum, your plan should define what to preserve:

- System and application logs

- Prompt and output records, subject to privacy and retention rules

- Model version, configuration, and deployment history

- Access control logs and admin actions

- Vendor status messages and support correspondence

- Timestamped incident notes and decision records

Work closely with legal on retention boundaries. Some records may need to be preserved for litigation or regulatory review, while other data should be minimized to reduce privacy exposure. The goal is to retain enough evidence to understand the incident without creating a second problem through over-collection.
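A common way to keep preserved evidence trustworthy is to record a cryptographic hash and a UTC timestamp for each artifact at collection time, so anyone can later confirm the file has not changed. The Python sketch below uses only the standard library (`hashlib`, `pathlib`, `datetime`); the manifest fields, category labels, and `collected_by` values are assumptions to adapt to your own chain-of-custody process.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large log exports do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def manifest_entry(path: Path, category: str, collected_by: str) -> dict[str, object]:
    """Record what was preserved, by whom, when, and its hash at collection time."""
    return {
        "path": str(path),
        "category": category,  # e.g. "prompt_output_records", "access_logs"
        "collected_by": collected_by,
        "collected_at_utc": datetime.now(timezone.utc).isoformat(),
        "size_bytes": path.stat().st_size,
        "sha256": sha256_of(path),
    }


def verify(entry: dict[str, object]) -> bool:
    """True if the file still exists and matches the hash in its manifest entry."""
    path = Path(str(entry["path"]))
    return path.exists() and sha256_of(path) == entry["sha256"]
```

Store the manifest separately from the artifacts, for example in the incident record, and apply the retention limits agreed with legal to the artifacts themselves; hashing proves integrity but does not reduce the privacy sensitivity of what you keep.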

6. Test the plan with realistic scenarios

An incident response plan is only useful if people can follow it under pressure. Tabletop exercises help teams practice their roles and expose weak points in the workflow before a real incident happens.

Use scenarios that match your actual AI use cases. Good examples include:

- A customer support chatbot leaks account information after prompt injection

- An internal assistant gives incorrect compliance guidance to employees

- A vendor model outage disrupts an automated approval workflow

- An AI feature generates discriminatory output in a regulated process

During the exercise, watch for delays in decision-making, unclear escalation paths, missing logs, and confusion over who can approve containment or disclosure. Capture those gaps in a follow-up action list and assign owners with deadlines.
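The follow-up list works best when every gap has an owner and a due date that someone actually checks. This minimal Python sketch, with hypothetical `ActionItem` fields, flags open items that are unowned, undated, or overdue so they can be raised at the next review.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionItem:
    """One gap found during a tabletop exercise (hypothetical fields)."""
    gap: str
    owner: Optional[str] = None
    due: Optional[date] = None
    done: bool = False


def needs_attention(items: list[ActionItem], today: date) -> list[tuple[str, str]]:
    """Return (gap, reason) pairs for open items, reporting the first problem per item."""
    flagged = []
    for item in items:
        if item.done:
            continue
        if item.owner is None:
            flagged.append((item.gap, "no owner"))
        elif item.due is None:
            flagged.append((item.gap, "no deadline"))
        elif item.due < today:
            flagged.append((item.gap, "overdue"))
    return flagged
```

For example, a gap such as "prompt logs not retained for the support chatbot" with no owner would surface as `("...", "no owner")` until someone takes it.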

What a strong AI incident response plan should include

If you are building this from scratch, the plan should be short enough to use and detailed enough to matter. A solid version usually includes the following components:

- A definition of AI incidents and severity levels

- Named owners for security, legal, operations, and engineering

- A step-by-step response workflow with escalation thresholds

- Evidence retention and documentation requirements

- Notification rules for leadership, customers, regulators, or vendors

- A testing schedule for tabletop exercises and periodic reviews

You do not need a separate document for every AI use case. But you do need enough specificity that teams know how to respond when the incident affects a customer-facing product, an internal workflow, or a regulated decision process.

Final thoughts

AI risk becomes much easier to manage when the response process is designed in advance. A good incident response plan does not eliminate failures, but it reduces confusion, shortens response time, and helps each team act with confidence.

Start with clear definitions, assign ownership, set severity thresholds, and test the plan in realistic scenarios. If security, legal, and operations can work from the same playbook, your organization will be far better prepared for the first serious AI incident that comes its way.