Table of Contents

- Key Takeaways

- 1. Start with the data the tool will touch

- 2. Understand how the vendor uses your data

- 3. Review access controls and technical safeguards

- 4. Check compliance claims against actual requirements

- 5. Evaluate incident response, business continuity, and support

- 6. Put the review into contract terms

- Final checklist before approval

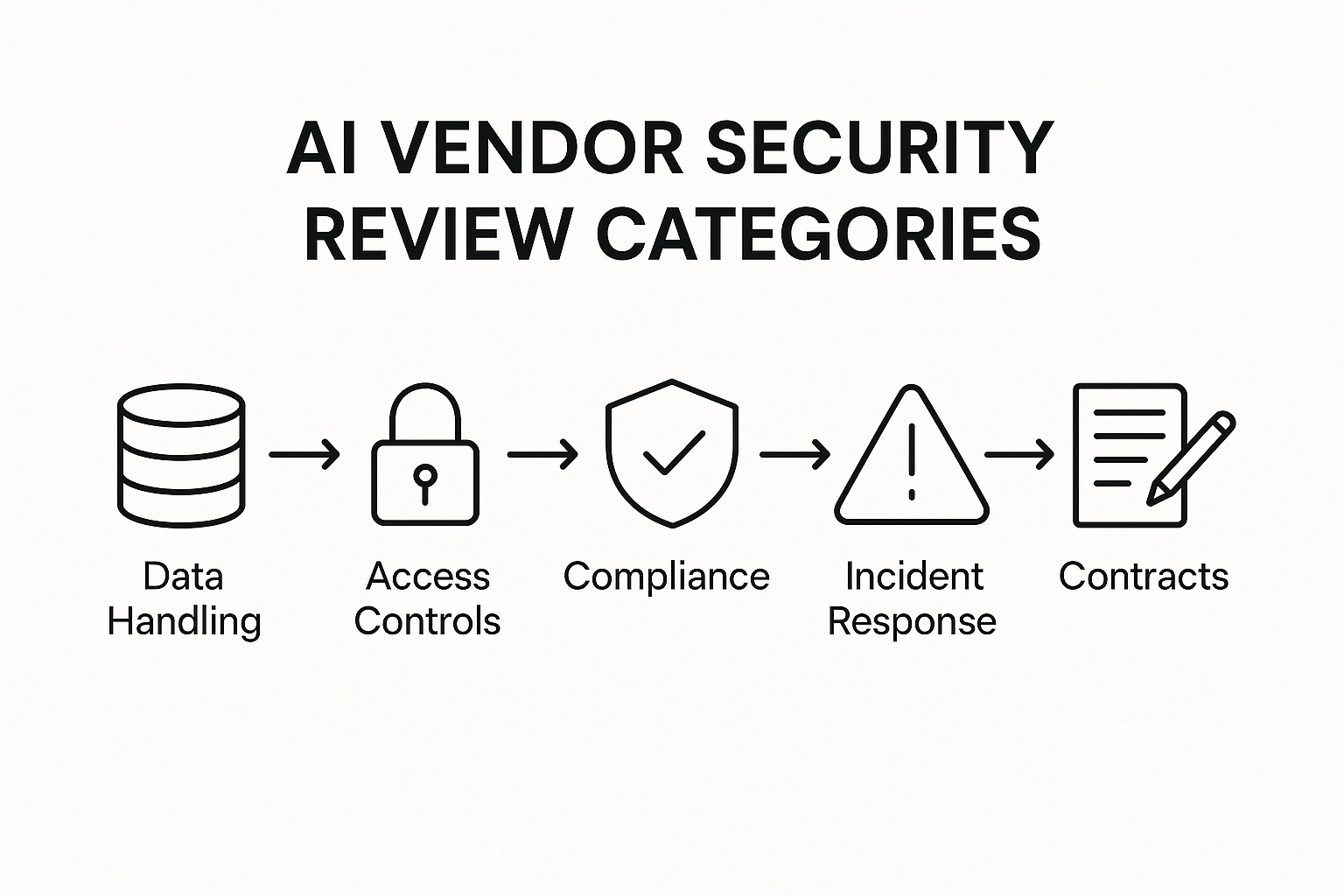

Adopting an AI tool can create clear business value, but it also introduces new security, privacy, and governance questions. The vendor may process sensitive customer data, store prompts and outputs, rely on third-party model providers, or use your inputs to improve their service. If those issues are not reviewed early, the convenience of a new tool can become a long-term risk.

A useful AI vendor security review is not about blocking innovation. It is about making sure the business knows what data is leaving the organization, who can access it, how it is protected, and what happens when something goes wrong. The goal is to move from assumptions to evidence.

Key Takeaways

- Start with data flow: know what the AI tool collects, stores, shares, and retains.

- Ask whether the vendor uses your data to train models, and whether you can opt out.

- Review access controls, encryption, logging, and incident response before approval.

- Check the vendor’s compliance posture, but verify details instead of relying on badges.

- Put obligations in writing through contracts, security addenda, and data processing terms.

- Reassess the vendor regularly, especially if the tool expands or the vendor changes policies.

1. Start with the data the tool will touch

The first question is simple: what data will the AI vendor receive from your business? That includes prompt text, uploaded files, user profiles, metadata, conversation history, and any system context the tool can access. In practice, this is often broader than teams expect.

Security reviews should classify the data by sensitivity. A brainstorming assistant that never sees customer data is different from a support tool that processes personal information, payment details, or internal documents. If the vendor will handle regulated or confidential data, the review needs to be stricter.
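As a rough illustration of classifying by sensitivity, the sketch below maps data types to a sensitivity level and derives a review tier from the most sensitive one. The level names, the data type labels, and the tier names ("light", "standard", "strict") are assumptions made for this example, not an established scheme; a real review would use the organization's own classification policy.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


# Hypothetical mapping; replace with the organization's own data inventory.
DATA_SENSITIVITY = {
    "brainstorming_notes": Sensitivity.INTERNAL,
    "internal_documents": Sensitivity.CONFIDENTIAL,
    "personal_information": Sensitivity.REGULATED,
    "payment_details": Sensitivity.REGULATED,
}


def review_tier(data_types):
    """Return the review tier implied by the most sensitive data type."""
    # Unclassified data types are treated as regulated until someone reviews them.
    highest = max(
        (DATA_SENSITIVITY.get(t, Sensitivity.REGULATED) for t in data_types),
        default=Sensitivity.PUBLIC,
    )
    if highest >= Sensitivity.CONFIDENTIAL:
        return "strict"
    if highest == Sensitivity.INTERNAL:
        return "standard"
    return "light"
```

Treating unknown data types as regulated is a deliberately conservative choice: it forces someone to classify new data before the tool gets a lighter review.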

Useful questions include:

- What data types are required for the tool to function?

- Can the business limit what users submit?

- Does the vendor retain prompts, outputs, or uploaded documents?

- How long is retained data kept, and can it be deleted on request?
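One way to limit what users submit is to redact obvious identifiers before a prompt leaves the organization. The sketch below is a minimal example under stated assumptions: the patterns only catch email addresses and 13 to 16 digit card-like numbers, the placeholder labels are invented for illustration, and simple regular expressions are not a substitute for a proper data loss prevention control.

```python
import re

# Simplified email pattern; it will miss unusual but valid addresses.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(prompt: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    return CARD_RE.sub("[CARD]", prompt)


print(redact("Contact jane@example.com about card 4111 1111 1111 1111"))
# prints: Contact [EMAIL] about card [CARD]
```

A filter like this reduces accidental exposure but does not remove the need to ask the vendor about retention and deletion, since anything it misses is still sent.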

2. Understand how the vendor uses your data

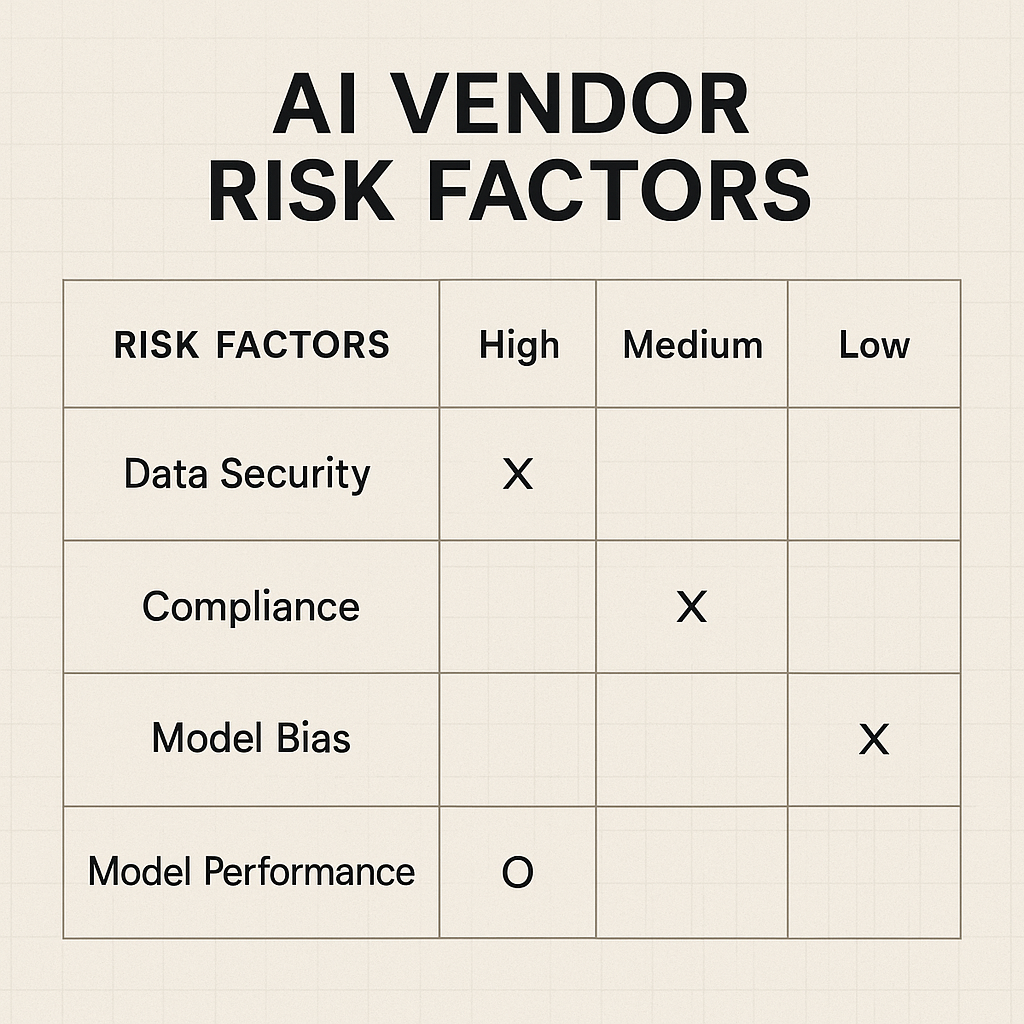

Many organizations focus on the AI model itself and miss the vendor’s data-use policy. Some vendors use customer inputs to improve their systems unless customers explicitly opt out. Others keep prompts for abuse monitoring, product debugging, or service analytics. Those practices may be reasonable, but they should be visible and controllable.

This is one of the most important parts of the review because data-use policy can change the risk profile dramatically. A tool that stores business content for later model training may create confidentiality issues even if the interface looks harmless.

| Review area | What to confirm | Why it matters |
|---|---|---|
| Training use | Whether your data is used to train models | Affects confidentiality and IP exposure |
| Retention | How long prompts and outputs are stored | Long retention increases breach and discovery risk |
| Subprocessors | Whether third parties process your data | Expands the number of entities handling sensitive data |
| Opt-out controls | Whether customers can disable data use for training or analytics | Gives the business more control over exposure |
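The review areas in the table can be tracked as a small record so that unconfirmed answers stay visible. The following is a sketch only: the class name, field names, and the 30-day retention threshold are hypothetical, and `None` is used to mean "not yet confirmed with the vendor".

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DataUseFindings:
    # None means "not yet confirmed with the vendor".
    trains_on_customer_data: Optional[bool] = None
    retention_days: Optional[int] = None
    subprocessors: Optional[List[str]] = None
    training_opt_out: Optional[bool] = None

    def open_items(self, max_retention_days: int = 30) -> List[str]:
        """List findings that are unconfirmed or outside the assumed limits."""
        items = []
        if self.trains_on_customer_data is None:
            items.append("Confirm whether customer data is used for training")
        elif self.trains_on_customer_data and not self.training_opt_out:
            items.append("Training use is on and no opt-out is confirmed")
        if self.retention_days is None:
            items.append("Confirm retention period for prompts and outputs")
        elif self.retention_days > max_retention_days:
            items.append("Retention exceeds the internal limit")
        if self.subprocessors is None:
            items.append("Request the subprocessor list")
        if self.training_opt_out is None:
            items.append("Confirm opt-out controls")
        return items
```

For example, `DataUseFindings(True, 90, ["model-provider"], False).open_items()` returns two items: one for training use without an opt-out and one for retention above the assumed limit.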

3. Review access controls and technical safeguards

Even if the vendor has strong policies, the platform still needs technical protections. At minimum, the review should confirm encryption in transit and at rest, role-based access controls, multi-factor authentication for administrative users, and logging for sensitive actions.

If the AI tool integrates with internal systems, ask how API keys are stored, whether access scopes are limited, and whether the vendor supports single sign-on and SCIM provisioning. These controls reduce the chance that a compromised account or over-permissioned integration becomes a broader breach.

Key technical questions

- Are customer environments logically isolated?

- Is data encrypted by default across production systems and backups?

- Can admins review user activity and audit logs?

- How are model endpoints, API keys, and service credentials protected?
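The baseline safeguards and the scope question above lend themselves to two simple checks. This is a minimal sketch: the control key names and the scope strings in the usage note are illustrative assumptions, not identifiers from any particular vendor or standard.

```python
# Baseline controls named in this section; the key names are illustrative.
BASELINE_CONTROLS = (
    "encryption_in_transit",
    "encryption_at_rest",
    "role_based_access_control",
    "admin_mfa",
    "sensitive_action_logging",
)


def missing_controls(confirmed):
    """Return baseline controls the vendor has not confirmed with evidence."""
    return [c for c in BASELINE_CONTROLS if not confirmed.get(c, False)]


def excess_scopes(granted, needed):
    """Return scopes the integration holds beyond what it needs."""
    return sorted(set(granted) - set(needed))
```

For instance, `excess_scopes(["tickets:read", "tickets:write", "users:admin"], ["tickets:read"])` returns `["tickets:write", "users:admin"]`, which is the kind of over-permissioned integration worth questioning before approval.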

4. Check compliance claims against actual requirements

Compliance certifications and attestations help, but they are not a complete security review. A SOC 2 report, an ISO 27001 certificate, and industry-specific attestations can be useful signals, as can documented GDPR readiness or HIPAA alignment. Still, the business should confirm that the vendor’s controls match its actual data handling needs.

For example, a vendor may have a compliance badge but still lack a data processing agreement, a clear retention schedule, or region-specific storage commitments. If the tool will process employee data, customer data, or regulated records, legal and security teams should review the obligations together.

It is also worth asking whether the vendor has had a recent penetration test, what issues were found, and how quickly they were remediated. A mature vendor should be able to summarize this without oversharing sensitive details.

5. Evaluate incident response, business continuity, and support

Security is not only about prevention. It is also about how the vendor responds when something fails. Your review should cover incident notification timelines, escalation paths, service availability commitments, backup and recovery practices, and support for evidence collection if an incident affects your environment.

For AI tools, continuity matters because outages can interrupt customer support, sales workflows, analytics, or internal operations. If the service is part of a critical process, the vendor should be able to explain uptime history, recovery objectives, and how service degradation is communicated.

Questions to ask:

- How quickly will the vendor notify you of a security incident?

- What is the process for handling account compromise or suspected misuse?

- Are backups tested, and how often?

- Is there a documented business continuity plan?

6. Put the review into contract terms

Once the technical review is complete, the findings should be reflected in the contract. This is where many AI purchases become weaker than they should be. A vendor may verbally agree to good practices, but if the agreement does not say the same thing, the business has limited recourse later.

Contract language should address data ownership, confidentiality, retention, deletion, subprocessors, breach notice, audit rights where appropriate, and restrictions on secondary use of customer data. If the vendor offers standard terms only, legal and procurement teams should decide which risks are acceptable and which are not.

Good contracts do not eliminate risk, but they make expectations enforceable. That is especially important with AI vendors whose policies may evolve quickly as products and model partnerships change.

Final checklist before approval

Before a new AI tool is approved, the business should be able to answer a few basic questions with confidence: What data will leave the organization? How will it be protected? Will it be used to train models? Who can access it? What happens if there is an incident? And what legal protections are in place if the vendor changes course?

If those answers are clear, the organization can move faster with less guesswork. If they are not, the right next step is not a blind yes or no. It is a narrower rollout, stronger controls, or a deeper review.
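The questions above can be kept as a simple gate in an intake workflow. The sketch below assumes each question is answered by pointing to a piece of evidence (a contract clause, a vendor document, a test result); the dictionary keys and function names are hypothetical.

```python
# Final checklist questions from this article; the short keys are illustrative.
CHECKLIST = {
    "data_leaving": "What data will leave the organization?",
    "protection": "How will it be protected?",
    "training_use": "Will it be used to train models?",
    "access": "Who can access it?",
    "incident": "What happens if there is an incident?",
    "legal": "What legal protections are in place if the vendor changes course?",
}


def unanswered_questions(evidence):
    """Return checklist questions that lack a non-empty evidence entry."""
    return [q for key, q in CHECKLIST.items() if not evidence.get(key)]


def ready_for_approval(evidence):
    """True only when every checklist question has evidence attached."""
    return not unanswered_questions(evidence)
```

When `ready_for_approval` returns `False`, the list from `unanswered_questions` shows where a narrower rollout, stronger controls, or a deeper review should focus.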

AI vendors are becoming central to everyday business operations. That makes vendor security review less of a compliance task and more of a standard part of responsible adoption.