Table of Contents

- Key Takeaways

- What an AI Governance Policy Actually Needs to Do

- Start with a Risk-Based Structure

- Define Roles and Decision Rights Clearly

- Set the Core Controls Before Anyone Deploys a Model

- Design an Approval Workflow That Teams Can Actually Follow

- Write the Policy in Business Language, Not Technical Jargon

- Keep Governance Alive After Launch

- Final Thoughts

AI adoption rarely fails because teams lack ideas. It usually fails because no one has defined who can approve a use case, what controls are required, or how risk should be reviewed before a model reaches production. An AI governance policy solves that problem. Done well, it gives your business a repeatable way to evaluate AI tools, protect sensitive data, and move faster without creating avoidable compliance or security gaps.

The goal is not to slow innovation. It is to make AI use predictable, auditable, and easier to scale. That means turning broad principles like “use AI responsibly” into specific rules for ownership, review, testing, escalation, and sign-off.

Key Takeaways

- An AI governance policy should define who owns AI decisions, which use cases require review, and what controls are mandatory.

- Separate low-risk AI use from high-risk use so simple experiments do not go through the same process as customer-facing or regulated workflows.

- Use a clear approval workflow with legal, security, privacy, and business input where needed.

- Document data handling, model testing, human oversight, and incident response before deployment.

- Review the policy regularly as tools, regulations, and business use cases change.

What an AI Governance Policy Actually Needs to Do

An AI governance policy is not a long statement about ethics. It is an operating document. Its job is to answer practical questions such as: Who can use AI tools? Which data can be shared with external systems? What happens if a model makes a bad recommendation? Who signs off on customer-facing use cases?

For most businesses, the policy should do five things:

- Set boundaries for acceptable AI use

- Assign ownership across business, legal, security, and IT teams

- Define required controls based on risk

- Create a standard approval workflow

- Provide a process for monitoring and updating AI systems after launch

If the policy does not help teams make decisions, it will be ignored. The best policies are short enough to use, but specific enough to enforce.

Start with a Risk-Based Structure

Not every AI use case deserves the same amount of scrutiny. A chatbot helping an internal team draft meeting notes is not the same as an AI tool that influences lending decisions, hiring, pricing, or customer support outcomes.

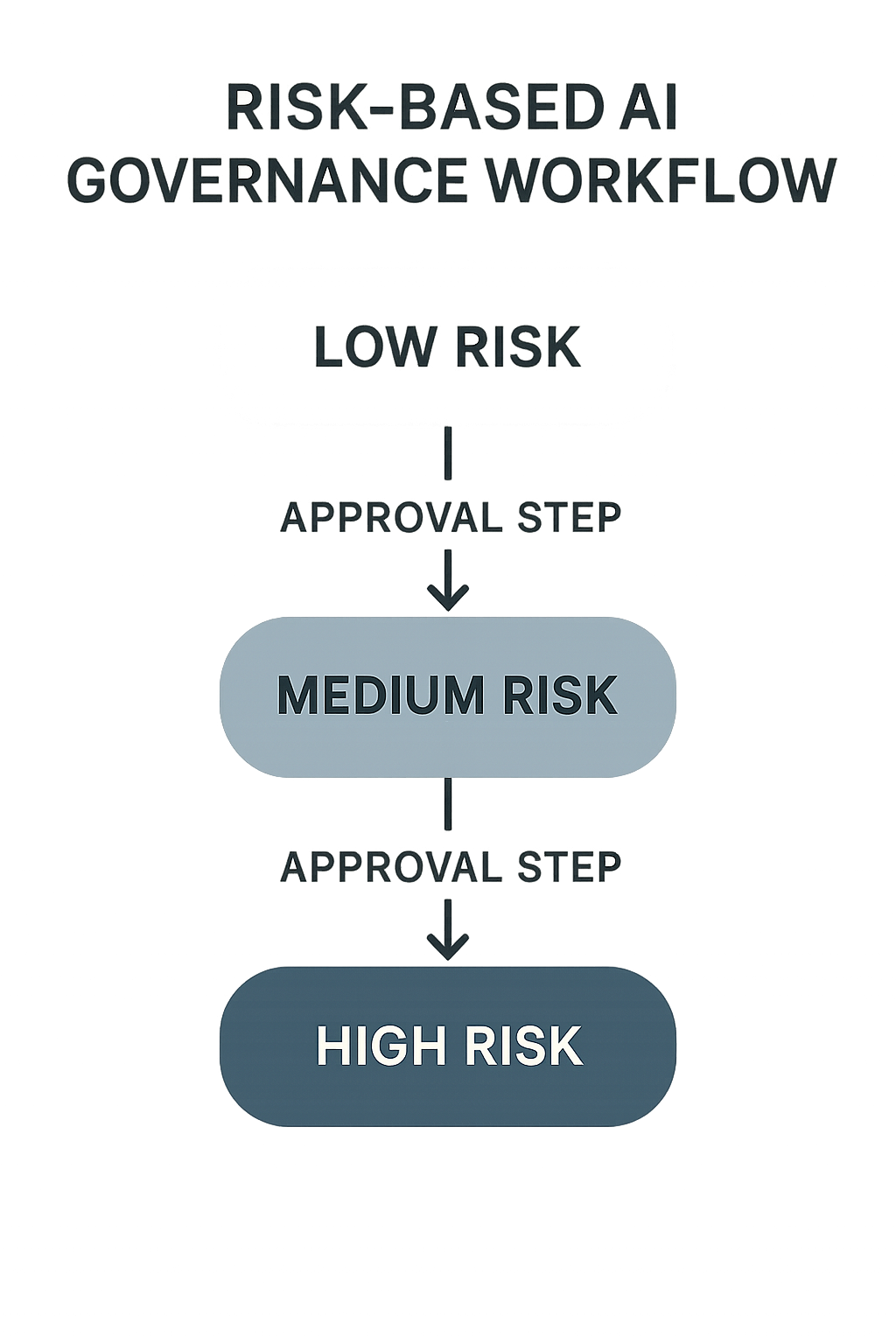

A risk-based structure keeps governance practical. It lets low-risk use cases move quickly while routing sensitive or high-impact applications through deeper review.

A simple way to classify AI use cases

| Risk level | Example use case | Typical controls | Approval path |
|---|---|---|---|
| Low | Internal drafting, summarization, brainstorming | Approved tools only, no sensitive data, user training | Manager or team lead review |
| Medium | Customer service assistance, sales enablement, internal decision support | Data review, human oversight, output testing, logging | Business owner plus security/privacy review |
| High | Hiring, credit, pricing, healthcare, legal, or regulated decisions | Formal testing, legal review, model monitoring, escalation plan, audit trail | Cross-functional approval committee |

This type of classification helps everyone understand what needs to happen before a project can launch. It also prevents governance from becoming a bottleneck for low-risk use cases.
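For teams that track use cases in a script or internal tool, the classification above can be expressed as a few rules. The sketch below is illustrative only: the `UseCase` fields, the `classify` function, and the exact rules that separate medium from low are assumptions, not part of any standard. The domain list and approval paths are taken from the table.

```python
from dataclasses import dataclass

# Domains from the "High" row of the table above.
HIGH_RISK_DOMAINS = {"hiring", "credit", "pricing", "healthcare", "legal", "regulated"}

# Approval paths copied from the table above.
APPROVAL_PATH = {
    "low": "Manager or team lead review",
    "medium": "Business owner plus security/privacy review",
    "high": "Cross-functional approval committee",
}


@dataclass
class UseCase:
    """Hypothetical intake record; field names are assumptions."""
    name: str
    domain: str                # e.g. "drafting", "customer_service", "hiring"
    customer_facing: bool      # does the output reach or affect customers?
    uses_sensitive_data: bool  # does the tool see sensitive or personal data?


def classify(use_case: UseCase) -> str:
    """Return 'low', 'medium', or 'high' using illustrative rules."""
    if use_case.domain in HIGH_RISK_DOMAINS:
        return "high"
    # Assumption: customer exposure or sensitive data lifts a case to medium.
    if use_case.customer_facing or use_case.uses_sensitive_data:
        return "medium"
    return "low"


if __name__ == "__main__":
    case = UseCase("Support reply drafts", "customer_service", True, False)
    tier = classify(case)
    print(tier, "->", APPROVAL_PATH[tier])
```

Your own rules will likely need more inputs, such as the vendor or the degree of automation. The point is that the tier and its approval path should be derivable from a handful of answers, not negotiated case by case.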

Define Roles and Decision Rights Clearly

One of the most common governance failures is ambiguity. Business teams assume IT is reviewing the AI tool. Legal assumes privacy has signed off. Security assumes the vendor review is already complete. By the time anyone notices, the tool is already in use.

A strong policy clearly separates responsibility from approval authority. It should define who owns the use case, who reviews risk, and who has the final say.

Typical AI governance roles

- Business owner: Defines the use case, value, and expected outcome.

- AI or product owner: Coordinates implementation, documentation, and testing.

- Security team: Reviews access, vendor risk, logging, and technical safeguards.

- Legal and privacy: Evaluates regulatory, contractual, and data protection issues.

- Compliance or risk: Checks policy alignment and reviews higher-risk use cases.

- Executive sponsor: Resolves escalations and approves material risk exceptions.

For larger organizations, a governance committee can handle high-risk cases. For smaller businesses, the same functions may be covered by a few named reviewers. What matters is that the policy spells out who does what.

Set the Core Controls Before Anyone Deploys a Model

Controls are the practical guardrails that make the policy real. They should not be limited to enterprise AI systems. Even simple third-party tools can create risk if teams paste in sensitive data or rely on unverified outputs.

At a minimum, the policy should cover these control areas:

- Data handling: What data can be used, shared, or stored, and what data is prohibited.

- Approved tools: Which vendors, models, and platforms are allowed.

- Human oversight: When a person must review, approve, or override outputs.

- Testing and validation: How teams check accuracy, bias, and failure modes.

- Logging and traceability: What gets recorded for audit and incident review.

- Access management: Who can configure models, prompts, and integrations.

For customer-facing or regulated use cases, add stronger requirements such as monitoring, rollback procedures, and documented exception handling. The more consequential the decision, the more explicit the controls should be.
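One way to make these control areas enforceable is a simple pre-deployment checklist. The following is a minimal sketch, assuming each control is tracked as a short identifier. The identifiers and the `missing_controls` helper are hypothetical; the control areas themselves come from the list above.

```python
# Baseline control areas from the list above (identifiers are assumptions).
BASE_CONTROLS = {
    "data_handling",
    "approved_tools",
    "human_oversight",
    "testing_validation",
    "logging_traceability",
    "access_management",
}

# Stronger requirements for customer-facing or regulated use cases.
EXTRA_CONTROLS = {"monitoring", "rollback_procedure", "exception_handling"}


def missing_controls(documented: set[str], customer_facing_or_regulated: bool) -> list[str]:
    """Return the required controls that have not been documented yet."""
    required = set(BASE_CONTROLS)
    if customer_facing_or_regulated:
        required |= EXTRA_CONTROLS
    return sorted(required - documented)


if __name__ == "__main__":
    done = {"data_handling", "approved_tools", "human_oversight"}
    print(missing_controls(done, customer_facing_or_regulated=True))
```

A non-empty result is a signal to pause the launch, not a replacement for an actual review of each control.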

Design an Approval Workflow That Teams Can Actually Follow

Approval workflows fail when they are too vague or too heavy. If the process takes three weeks for a low-risk experiment, people will work around it. If it is too light, the business absorbs the risk later.

A good approval workflow is simple, tiered, and documented. It should guide teams from idea to launch with clear checkpoints.

A practical AI approval process

- Intake: The business owner submits a short use case form describing the goal, users, data, vendor, and expected impact.

- Risk classification: The request is labeled low, medium, or high based on the business context and data sensitivity.

- Control review: Required reviewers check security, privacy, legal, and compliance conditions.

- Decision: The request is approved, approved with conditions, or escalated.

- Launch and monitoring: The team deploys the use case with logging, testing, and periodic review.

Keep the intake form short. If the form is too complex, people will skip it until they are forced to comply. A few targeted questions usually work better than a long checklist.
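The intake and decision steps above can be sketched in a few lines. This is an illustration under stated assumptions: the reviewer lists per tier, the review outcome labels (`approve`, `conditions`, `object`), and the extra `pending` state are hypothetical. The intake fields and the three decision outcomes come from the process described above.

```python
# Intake fields from the process above.
INTAKE_FIELDS = ("goal", "users", "data", "vendor", "expected_impact")

# Assumption: which reviewers each risk tier requires.
REVIEWERS = {
    "low": ["team_lead"],
    "medium": ["business_owner", "security", "privacy"],
    "high": ["security", "privacy", "legal", "compliance"],
}


def missing_intake_fields(form: dict) -> list[str]:
    """Return intake fields that are absent or blank."""
    return [f for f in INTAKE_FIELDS if not str(form.get(f) or "").strip()]


def decide(risk: str, reviews: dict) -> str:
    """Combine reviewer outcomes into a single decision.

    `reviews` maps reviewer name to 'approve', 'conditions', or 'object'.
    """
    required = REVIEWERS[risk]
    if any(r not in reviews for r in required):
        return "pending"  # assumption: wait until every reviewer responds
    outcomes = [reviews[r] for r in required]
    if "object" in outcomes:
        return "escalated"
    if "conditions" in outcomes:
        return "approved_with_conditions"
    return "approved"


if __name__ == "__main__":
    form = {"goal": "Summarize tickets", "users": "Support team", "data": "Ticket text"}
    print(missing_intake_fields(form))
    print(decide("low", {"team_lead": "approve"}))
```

Note that a single objection escalates the request instead of rejecting it outright, which mirrors the three outcomes in the decision step.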

Write the Policy in Business Language, Not Technical Jargon

Many AI policies are hard to use because they are written like security documentation. That may satisfy a reviewer, but it does not help a product manager, marketer, or operations lead decide what to do next.

Use plain language. State what is allowed, what needs review, and what is prohibited. Include examples where helpful. For instance, explain whether teams can use public AI tools for drafting, whether customer data is ever allowed, and what counts as a regulated use case.

Your policy should also define how exceptions work. Business reality changes fast, and some projects will not fit neatly into standard rules. The exception process should be documented, time-bound, and approved by the right owner.
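A time-bound exception is easy to model as a record with an expiry date. The sketch below is one possible shape, not a prescribed format: the `PolicyException` class, its field names, and the 90-day default are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PolicyException:
    """Hypothetical record of an approved exception to the AI policy."""
    use_case: str
    reason: str
    approved_by: str
    granted_on: date
    valid_days: int = 90  # assumption: exceptions lapse after 90 days

    @property
    def expires_on(self) -> date:
        return self.granted_on + timedelta(days=self.valid_days)

    def is_active(self, today: date) -> bool:
        """True while the exception is within its validity window."""
        return self.granted_on <= today <= self.expires_on


if __name__ == "__main__":
    exc = PolicyException(
        use_case="Pilot with unapproved vendor",
        reason="Vendor review in progress",
        approved_by="Executive sponsor",
        granted_on=date(2025, 1, 1),
    )
    print(exc.expires_on, exc.is_active(date(2025, 2, 15)))
```

Recording the approver and the expiry together means an expired exception has to be renewed by a named owner instead of lingering indefinitely.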

Keep Governance Alive After Launch

AI governance is not finished when a project goes live. In practice, much of the risk shows up later, when teams update prompts, switch vendors, expand the use case, or feed the system new data.

That is why the policy should include post-launch monitoring and periodic review. Reassess higher-risk use cases on a schedule, and review any incidents or near misses. If a model starts drifting, producing inconsistent outputs, or creating complaints, the business should have a defined response path.

It is also worth revisiting the policy itself at least quarterly or whenever major changes occur in regulation, vendor offerings, or internal usage patterns. AI governance should evolve with the business, not sit in a folder until the next audit.
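The review triggers described in this section can be checked mechanically. The following sketch assumes a review interval per risk tier and a small set of change events. The interval values, the event names, and the `review_due` function are hypothetical and should be tuned to your own policy.

```python
from datetime import date, timedelta

# Assumption: how often each tier is reassessed, in days.
REVIEW_INTERVAL_DAYS = {"low": 365, "medium": 180, "high": 90}

# Changes named in this section that should prompt a review (labels are assumptions).
CHANGE_TRIGGERS = {
    "prompt_update",
    "vendor_switch",
    "scope_expansion",
    "new_data_source",
    "incident",
}


def review_due(risk: str, last_review: date, today: date, events=()) -> bool:
    """True if a change trigger occurred or the scheduled interval has elapsed."""
    if CHANGE_TRIGGERS & set(events):
        return True
    return today - last_review >= timedelta(days=REVIEW_INTERVAL_DAYS[risk])


if __name__ == "__main__":
    print(review_due("high", date(2025, 1, 1), date(2025, 3, 1)))
    print(review_due("low", date(2025, 1, 1), date(2025, 1, 2), ["vendor_switch"]))
```

Running a check like this on a schedule gives the business a list of use cases to revisit, which is often enough to keep reviews from being forgotten.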

Final Thoughts

A useful AI governance policy is less about control for its own sake and more about making responsible AI adoption scalable. When roles are clear, controls are risk-based, and approvals are predictable, teams can move faster with less uncertainty.

Start small, make the policy practical, and improve it as real use cases emerge. The businesses that treat governance as an operating system, not a one-time document, will be in the best position to use AI well.